
We have developed a novel vision-based scheme for driving a nonholonomic mobile robot to intercept a moving target. The proposed method has a two-level structure. On the lower level, the pan-tilt platform carrying the on-board camera is controlled so as to keep the target as close as possible to the center of the image plane. On the higher level, the relative position of the target is retrieved from its image coordinates and the camera pan-tilt angles through simple geometry, and used to compute a control law which drives the robot to the target. Various possible choices are discussed for the high-level robot controller, and the associated stability properties are rigorously analyzed. The proposed visual interception method is validated through simulations as well as experiments on the mobile robot MagellanPro.
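
The two levels described above can be sketched in a few lines of Python. This is a minimal illustration under assumptions of our own, not the implementation used on the MagellanPro. It assumes a pinhole camera with focal length `f` in pixels, the optical center lying on both the pan and tilt axes at height `h` above a flat floor, and the target point on the floor. The function names, gains, and sign conventions are hypothetical. The low level is a plain proportional law on the angular offset of the target from the optical axis. The high level recovers the target position by intersecting the viewing ray with the floor.

```python
import math

# Hypothetical gains, names and sign conventions; not the controller from the paper.


def pan_tilt_rates(u, v, f, k_pan=1.5, k_tilt=1.5):
    """Low level: proportional law pushing the target image point toward the image center.

    u, v: target image coordinates in pixels, measured from the image center
          (u positive to the right, v positive downward).
    f:    focal length in pixels.
    Returns (pan_rate, tilt_rate) in rad/s, with pan > 0 turning the camera left
    and tilt > 0 pointing it further down.
    """
    pan_error = math.atan2(u, f)   # horizontal angular offset of the target ray (camera frame)
    tilt_error = math.atan2(v, f)  # vertical angular offset of the target ray (camera frame)
    return -k_pan * pan_error, k_tilt * tilt_error


def target_position(u, v, f, pan, tilt, h):
    """High-level input: target position on the floor, in the robot frame.

    Assumes the optical center lies on both pan and tilt axes at height h [m]
    above a flat floor and that the target point lies on the floor.
    pan [rad] is positive to the left, tilt [rad] is positive downward.
    Returns (X, Y) in meters: X forward, Y to the left.
    """
    # Viewing ray (f, -u, -v) in robot axes (X fwd, Y left, Z up), tilted down
    # by `tilt` through a rotation about Y; components before panning:
    x1 = f * math.cos(tilt) - v * math.sin(tilt)
    y1 = -u
    z1 = -(f * math.sin(tilt) + v * math.cos(tilt))
    if z1 >= 0.0:
        raise ValueError("viewing ray does not point toward the floor")
    # Pan by `pan` (rotation about Z); the Z component is unchanged.
    x2 = math.cos(pan) * x1 - math.sin(pan) * y1
    y2 = math.sin(pan) * x1 + math.cos(pan) * y1
    # Scale the ray so that it drops exactly h: its intersection with the floor.
    s = h / -z1
    return s * x2, s * y2


# Example: target centered in the image, camera panned 20 deg left, tilted 30 deg down.
X, Y = target_position(u=0.0, v=0.0, f=500.0, pan=math.radians(20), tilt=math.radians(30), h=0.6)
rho, beta = math.hypot(X, Y), math.atan2(Y, X)  # distance and bearing for the robot controller
```

When the tracked point is the center of a ball of radius `r`, the same function applies with `h` replaced by `h - r`, since the ball center lies on a plane at height `r` above the floor.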

The visual interception algorithm has been designed and developed by L. Freda and G. Oriolo (with the help of F. Capparella and M. Malagnino).

More details are given in this paper to appear in Robotics and Autonomous Systems.

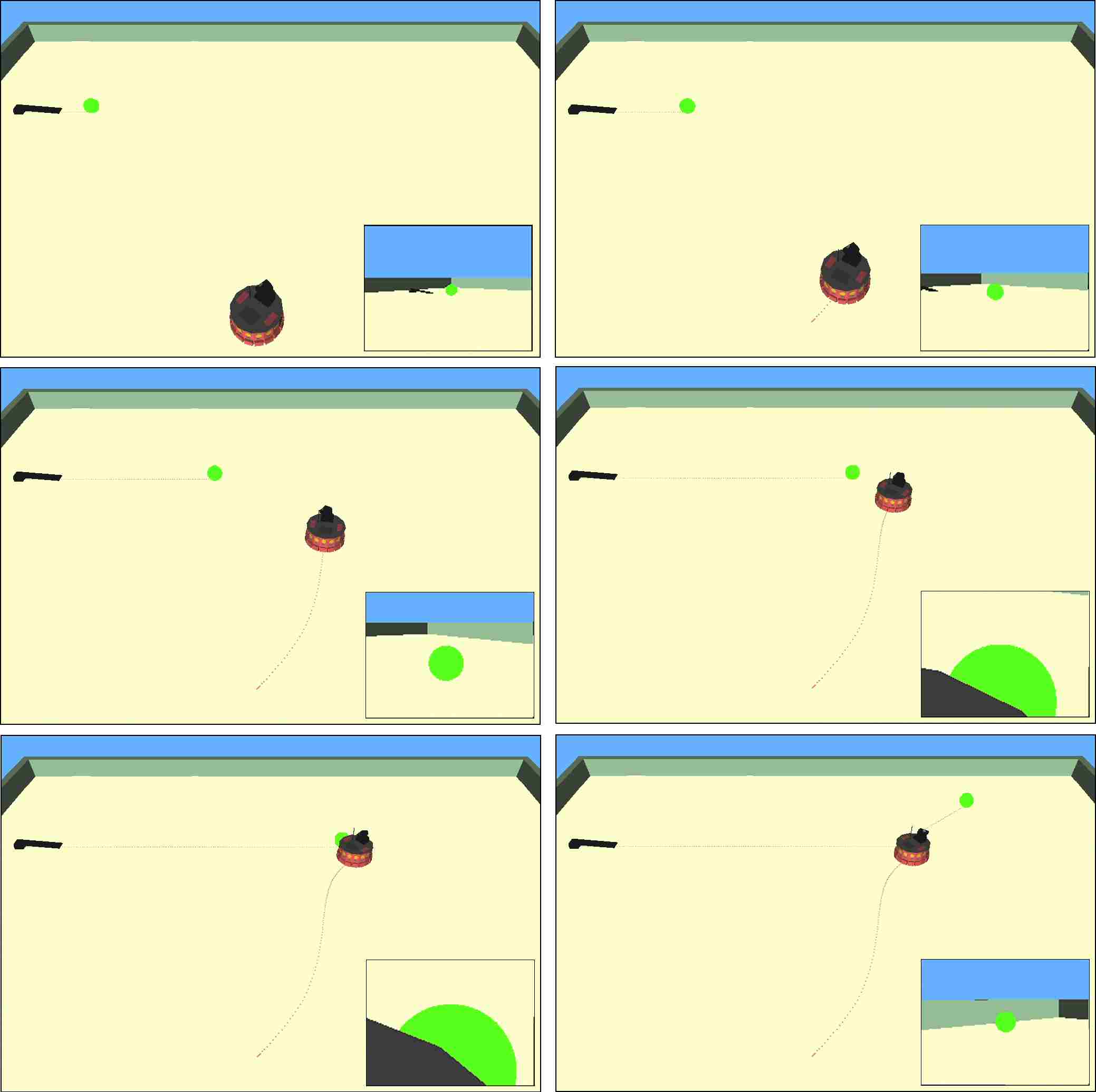

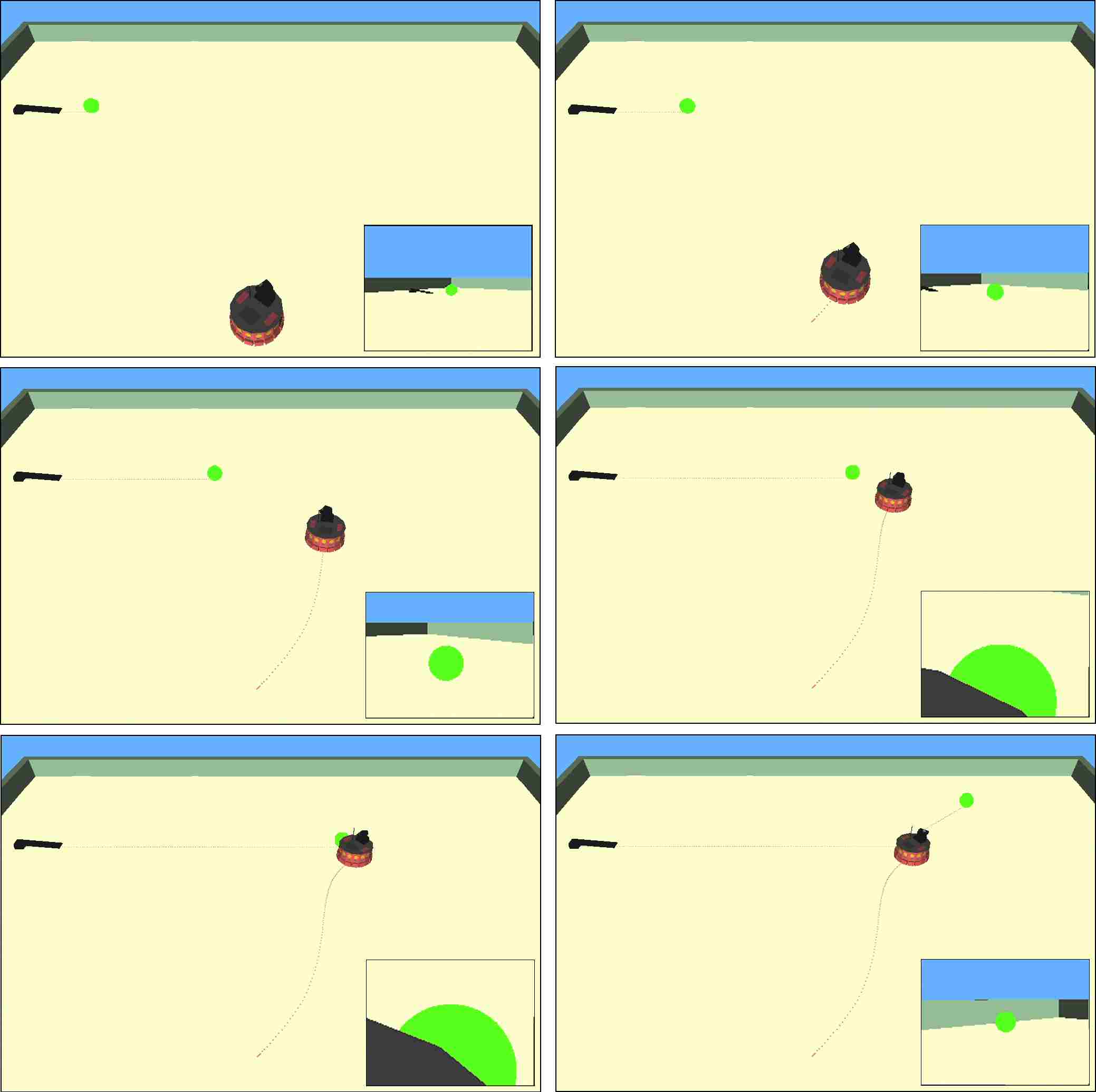

These clips, realized in Webots, show two simulations of our visual interception scheme with a ball moving along a line.

Simulation 1 (AVI). Note how the robot initially searches for and finds the target by moving only the pan-tilt camera.

Simulation 2 (AVI)
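
For readers who want to experiment with the higher level in isolation, the following is a minimal kinematic simulation, in the spirit of the clips above, of a unicycle-type robot chasing a target that moves along a straight line. It is a sketch under assumptions of our own. The target position is taken from ground truth instead of being reconstructed from the camera. The control law (constant forward speed, with turn rate proportional to the target bearing, i.e. plain pure pursuit) is one simple hypothetical choice and is not necessarily among the controllers analyzed in the paper. Speeds, gains, the capture radius, and all names are illustrative.

```python
import math
from dataclasses import dataclass


@dataclass
class Unicycle:
    x: float = 0.0      # position [m]
    y: float = 0.0
    theta: float = 0.0  # heading [rad]


def wrap_angle(a):
    """Wrap an angle to the interval [-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def pursuit_command(beta, v_max=0.5, k_omega=4.0):
    """Hypothetical high-level law: constant forward speed v_max [m/s] and
    turn rate proportional to the target bearing beta [rad] (pure pursuit)."""
    return v_max, k_omega * beta


def simulate(target0=(3.0, 2.0), target_vel=(-0.2, 0.0),
             dt=0.01, t_max=60.0, capture_radius=0.2):
    """Chase a target moving at constant velocity along a straight line.

    Returns (capture_time, final_distance); capture_time is None if the
    target does not come within capture_radius before t_max.
    """
    robot = Unicycle()
    tx, ty = target0
    t = 0.0
    while t < t_max:
        dx, dy = tx - robot.x, ty - robot.y
        rho = math.hypot(dx, dy)                             # distance to target
        if rho <= capture_radius:
            return t, rho
        beta = wrap_angle(math.atan2(dy, dx) - robot.theta)  # bearing in robot frame
        v, omega = pursuit_command(beta)
        # Forward-Euler step of the unicycle kinematics.
        robot.x += v * math.cos(robot.theta) * dt
        robot.y += v * math.sin(robot.theta) * dt
        robot.theta = wrap_angle(robot.theta + omega * dt)
        # The target moves along a straight line at constant velocity.
        tx += target_vel[0] * dt
        ty += target_vel[1] * dt
        t += dt
    return None, math.hypot(tx - robot.x, ty - robot.y)


capture_time, final_distance = simulate()
```

With this law, for a static target the bearing satisfies dβ/dt = (v/ρ) sin β - k_ω β. Close to the target (ρ < v/k_ω), a small bearing error therefore grows instead of decaying. For that reason, the capture radius of 0.2 m is chosen above v/k_ω = 0.125 m.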

We have implemented the visual interception algorithm on the MagellanPro mobile robot available in our laboratory.

Have a look at these two videos:

Experiment 1 (AVI) is a typical interception experiment with the ball moving along a line.

Experiment 2 (AVI) is a more challenging experiment where the ball is passed back and forth by two human players in a manner reminiscent of a common soccer training exercise.