Direct Directional Chamfer Optimization: D²CO

- Marco Imperoli and Alberto Pretto, “D²CO: Fast and Robust Registration of 3D Textureless Objects Using the Directional Chamfer Distance”, in Proceedings of the 10th International Conference on Computer Vision Systems (ICVS 2015), July 6-9, 2015, Copenhagen, Denmark, pages 316-328.

(PDF, bibtex)

In this work we propose a fast and robust 3D object registration method. The key idea of the D²CO registration algorithm is to refine the parameters (i.e., the object pose) using a cost function that exploits the Directional Chamfer Distance (DCD) tensor directly, i.e., by retrieving the costs and their derivatives from the tensor itself. Unlike other registration algorithms based on the ICP method, D²CO does not need to recompute the point-to-point correspondences, since the data association is implicitly encoded in the DCD tensor. Further information can be found at www.dis.uniroma1.it/~labrococo/D2CO/
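The idea of reading costs and derivatives straight from a DCD tensor can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names (`build_dcd_tensor`, `dcd_cost_and_gradient`), the parameters `n_bins` and `lam`, the nearest-neighbour lookup, and the central-difference derivatives are all assumptions made for illustration. The sketch builds one distance transform per quantized edge orientation, propagates distances across orientation channels with a penalty, and then looks up the cost and its image-space gradient for projected model points without any explicit correspondence search.

```python
import numpy as np
from scipy.ndimage import distance_transform_edt


def build_dcd_tensor(edge_points, edge_angles, shape, n_bins=8, lam=2.0):
    """Build a (n_bins, H, W) directional chamfer distance tensor.

    edge_points: (N, 2) integer array of (row, col) edge pixels.
    edge_angles: (N,) edge orientations in radians, in [0, pi).
    lam: hypothetical penalty for moving one orientation bin.
    """
    h, w = shape
    bins = np.floor(edge_angles / np.pi * n_bins).astype(int) % n_bins
    tensor = np.empty((n_bins, h, w))
    for k in range(n_bins):
        sel = bins == k
        if sel.any():
            mask = np.ones(shape, dtype=bool)
            mask[edge_points[sel, 0], edge_points[sel, 1]] = False
            # Distance from every pixel to the nearest edge of this channel.
            tensor[k] = distance_transform_edt(mask)
        else:
            # No edges with this orientation: start from a large distance.
            tensor[k] = np.hypot(h, w)
    # Propagate distances across the (circular) orientation dimension.
    for _ in range(2):
        for k in range(n_bins):
            tensor[k] = np.minimum(tensor[k], tensor[(k - 1) % n_bins] + lam)
        for k in range(n_bins - 1, -1, -1):
            tensor[k] = np.minimum(tensor[k], tensor[(k + 1) % n_bins] + lam)
    return tensor


def dcd_cost_and_gradient(tensor, pts, angles):
    """Look up cost and (d/drow, d/dcol) for projected model points.

    pts: (M, 2) float array of (row, col); angles: (M,) radians in [0, pi).
    Uses nearest-neighbour lookup and central differences (a simplification).
    """
    n_bins, h, w = tensor.shape
    k = np.floor(angles / np.pi * n_bins).astype(int) % n_bins
    r = np.clip(np.round(pts[:, 0]).astype(int), 1, h - 2)
    c = np.clip(np.round(pts[:, 1]).astype(int), 1, w - 2)
    cost = tensor[k, r, c]
    d_row = 0.5 * (tensor[k, r + 1, c] - tensor[k, r - 1, c])
    d_col = 0.5 * (tensor[k, r, c + 1] - tensor[k, r, c - 1])
    return cost, np.stack([d_row, d_col], axis=1)
```

In a pose refinement loop, the tensor would be built once per image; each iteration would only project the model points, call a lookup such as `dcd_cost_and_gradient`, and chain the returned image-space gradient with the projection Jacobian, which is why no correspondence step is needed.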

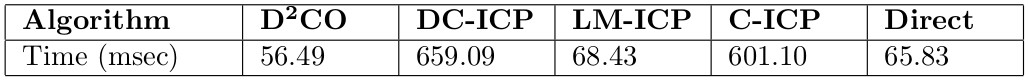

Rate of correct registrations plotted against the distance (angle + translation) of the initial guess from the ground-truth position (each plot refers to a different object).

Multi-view D²CO Object Registration

The multi-view D²CO extension widens the algorithm's basin of convergence while improving object localization accuracy. Further information can be found at www.dis.uniroma1.it/~labrococo/D2CO/

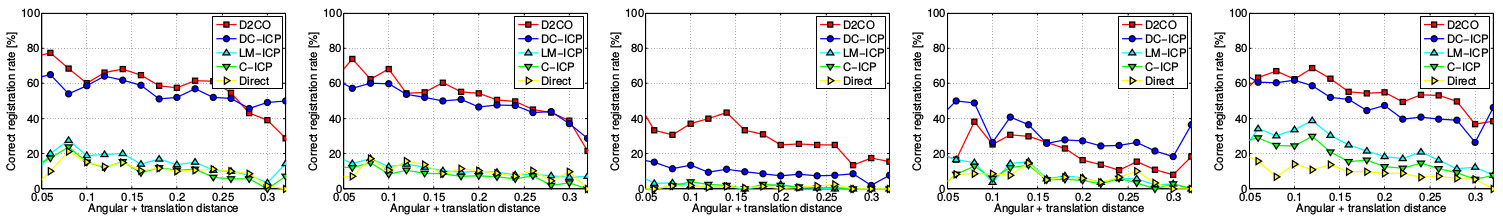

Rate of correct registrations plotted against the distance (angle + translation) of the initial guess from the ground-truth position (each plot refers to a different object).

Active Object Recognition and Localization

- Marco Imperoli and Alberto Pretto, “Active Detection and Localization of Textureless Objects in Cluttered Environments”, arXiv preprint arXiv:1603.07022.

(PDF, bibtex)

In this work we propose a novel solution to the next-best-view (NBV) problem that aims to resolve detection ambiguities while maximizing both the detection confidence and the localization accuracy. Further information can be found at www.dis.uniroma1.it/~labrococo/D2CO/

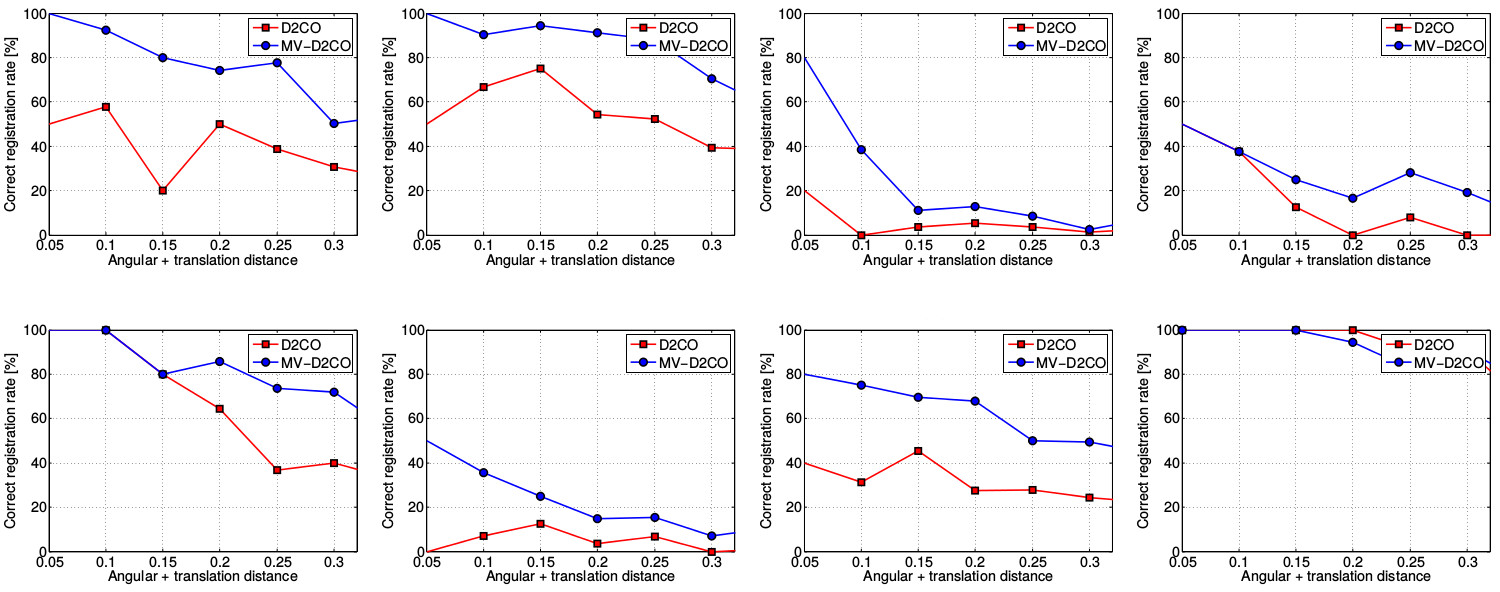

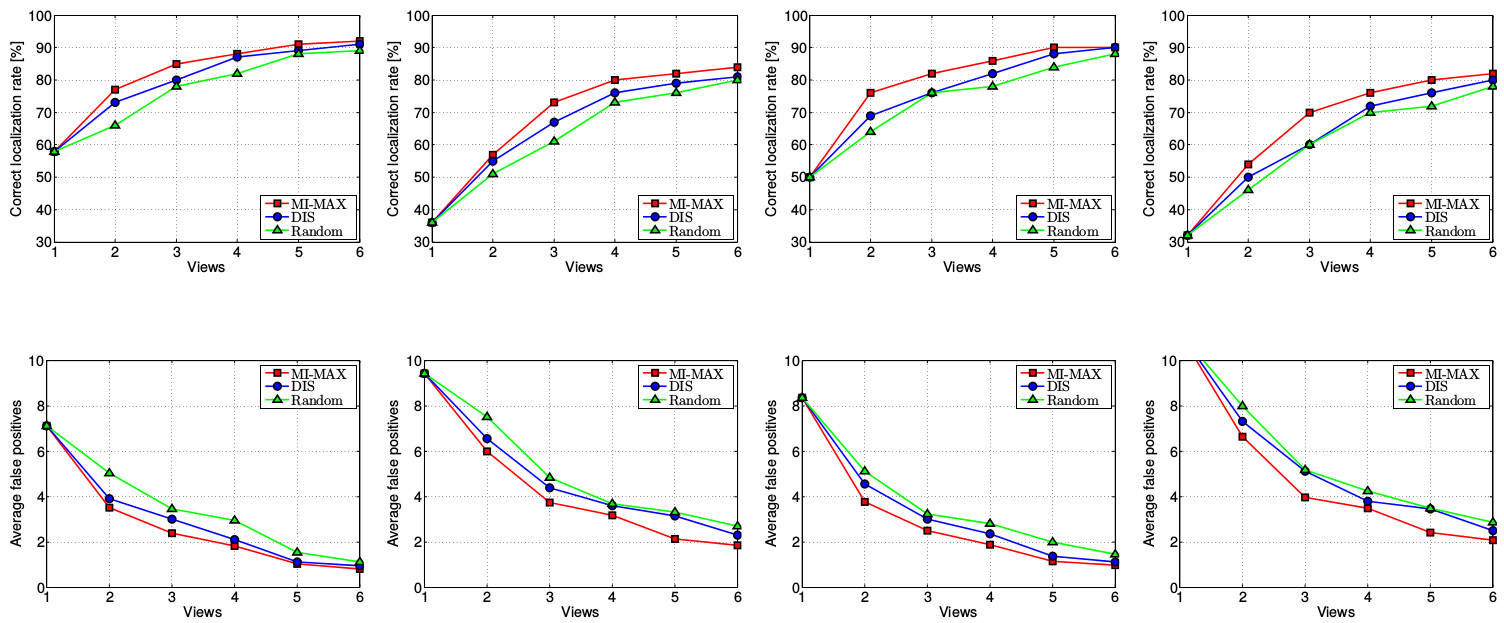

Our active perception approach (MI-MAX, in red) compared with other methods.